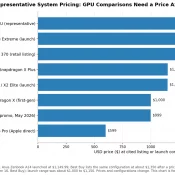

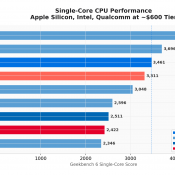

MacBook Neo Deep Dive: Benchmarks, Wafer Economics, and the 8GB Gamble

Updated May 14th and May 26th, 2026, with additional CPU and GPU benchmarks. The short version is unchanged: the MacBook Neo is VERY fast for bursty everyday use but fairly limited for sustained, memory-heavy, or pro workloads.

If you don’t have an interest in the computer industry and its history, you can probably skip everything else below. Otherwise, read on!

Availability Heads Up (late May)

Due to high demand, Apple has been periodically backordered on MacBook Neos lately, but